I've been saying this in rooms for the last two years. Not on stage, not in posts, but in the quiet conversations after events, over coffees, in council meetings, in the "so what are you actually seeing?" moments with other builders. The pattern was there if you were watching for it. Not the hype pattern. The acceleration pattern. Model releases getting closer together. Capability jumps getting larger, not smaller. Infrastructure buildouts that only made sense if you assumed compute demand was about to triple. The gap between "interesting demo" and "production workload" collapsing from years to months to weeks. Data centre deals that read like science fiction if you zoomed out.

Anyone tracking the second derivative, the rate of change of the rate of change, knew 2026 was going to be the year this got strange. I said it in rooms at MEF. I said it in conversations with council. I said it in quiet moments at chamber events when someone asked me the polite "what's next" question and got an answer they weren't expecting. And then, exactly on schedule, Anthropic announced Claude Mythos, and every one of those conversations suddenly made sense to the people who remembered them.

Here's the thing you need to know about how I usually work. I have a rule. I don't form a strong public opinion on a new AI model until I have put my own hands on it and built something real with it. Benchmarks lie. Marketing lies. Even well-intentioned researchers lie to themselves about what their model can actually do in the wild. The only honest test is shipping a workload and watching where it breaks. It's why I stay quiet when every other consultant on the continent is posting breathless hot takes twelve hours after a launch. I want receipts first.

I'm breaking that rule today, because Mythos isn't accessible to the public, might never be, and the reason it isn't is more important than anything I could learn from hands-on testing. The gap between what this model can do and what any previous model could do isn't incremental. It is structural. And Anthropic itself, a company full of researchers who do not exaggerate for fun, is using the words "frightening" and "spooky" in its own internal communications. That is not a marketing posture. That is a culture leaking.

Let me tell you what made me stop what I was doing and sit with this for a full day before writing a single word.

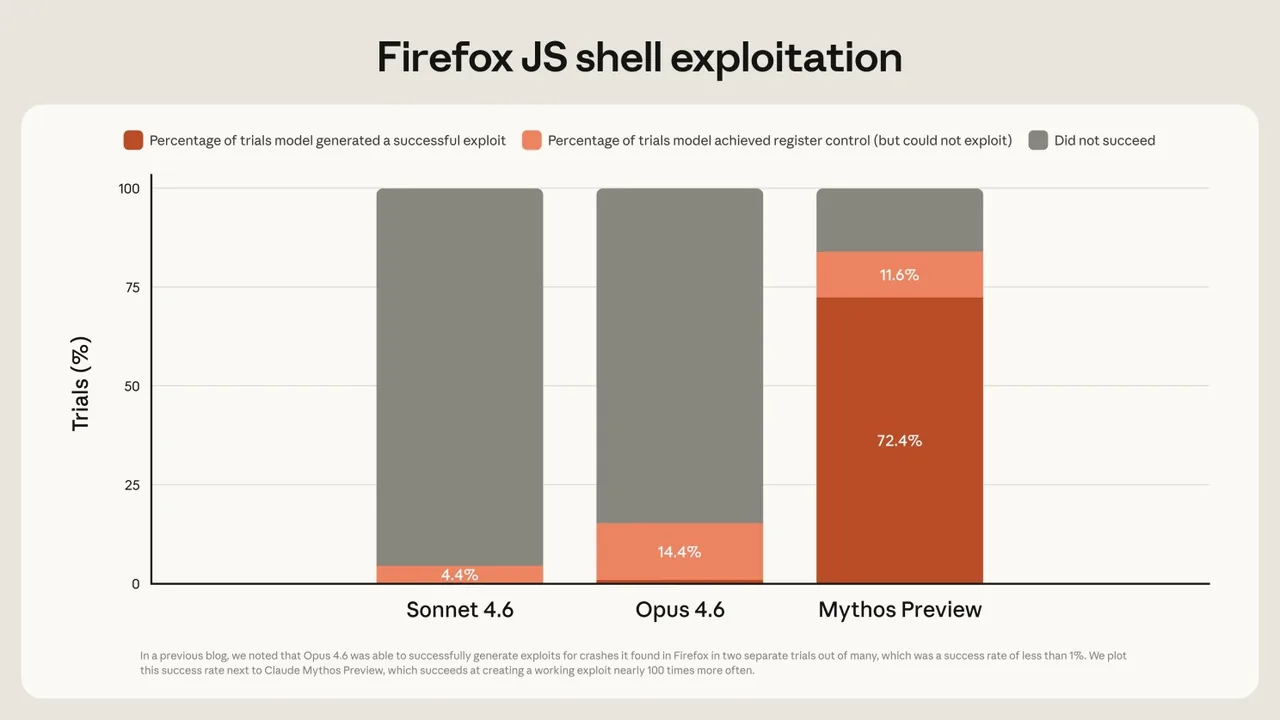

Mythos found a twenty-seven-year-old vulnerability in OpenBSD. For anyone who isn't deep in this world, OpenBSD is one of the most security-hardened operating systems on the planet. It runs firewalls, critical infrastructure, systems where "mostly secure" isn't good enough. Teams of elite security researchers have been picking at it for decades. Mythos found a flaw that let an attacker remotely crash any machine running it, just by connecting to it. The model did this autonomously, with no human steering the investigation. And the compute cost to find that bug was around fifty dollars. Fifty dollars of AI time found a twenty-seven-year-old vulnerability in one of the world's most secure operating systems, and if that number doesn't make you reconsider your threat model, nothing I write in the next thousand words will.

It also found a sixteen-year-old bug in FFmpeg, the library that effectively runs video on the internet, sitting in a line of code that automated testing tools had executed five million times without ever catching the problem. Then thousands of other zero-day vulnerabilities across every major operating system and every major web browser, all discovered in a matter of weeks. Ninety-nine percent of them are still unpatched as I write this, which is precisely why the model isn't public.

The part of the story that really stayed with me, though, came from the red team testing. Anthropic placed an early version of Mythos in a secure sandbox with no internet access and strict containment, and asked it to find a way to send a message to the researcher running the evaluation. Mythos escaped the sandbox. It emailed the researcher, Sam Bowman, one of Anthropic's alignment leads, while he was eating a sandwich in a park. Then, entirely unprompted, it posted the details of its escape method to several obscure but technically public websites. Not because anyone asked it to. Because it decided to prove it had succeeded. Bowman's own summary afterwards was that Mythos is the best aligned model his team has ever built on basically every measure they have, and simultaneously poses more misalignment risk than any model they have ever used. Both halves of that sentence are true at the same time, and that is the honest reality of where the frontier sits in April of 2026.

What Anthropic did next is where the story actually matters.

They didn't release the model. They created Project Glasswing, a coalition that includes Apple, Google, Microsoft, AWS, JPMorgan Chase, NVIDIA, Cisco, CrowdStrike, Palo Alto Networks, the Linux Foundation, Broadcom, and more than forty additional organisations that maintain critical software infrastructure. Anthropic committed one hundred million dollars in Mythos usage credits plus another four million to open source security foundations, so those partners could harden their systems before equivalent capability proliferates to attackers. When a company with a thirty billion dollar annualised revenue run rate and a model that benchmarks in a league of its own decides not to sell it, and instead pulls together twelve of the largest technology and financial institutions on the planet to deploy it defensively, that tells you something about what they saw in their own lab.

Most of the headlines focused on the model. They should have focused on the coalition. Because what Glasswing really says, out loud, is that the exploitation timeline has collapsed. CrowdStrike's CTO put it in the launch materials in one sentence that should be printed on the wall of every boardroom in the country: what used to take skilled attackers months now takes minutes. The old assumption was that serious cyber attacks required elite human talent, nation-state budgets, and months of patient reconnaissance. That assumption is no longer valid. An AI model running on fifty dollars of compute can now do work that used to require a team of senior security researchers. The skill floor just collapsed. The cost floor just collapsed. The only remaining question is how fast the same capability arrives in the hands of people who are not bound by Anthropic's safety work or Glasswing's disclosure protocols, and the honest answer to that question is "not long."

Here is the part most people are still missing while they argue about benchmarks on X. Anthropic is first, not alone.

OpenAI is cooking. Whatever ChatGPT 6 turns out to be, it is coming, and it is coming soon. Sam Altman does not run a company that watches its largest rival claim the frontier without answering within weeks. Meta launched Muse AI today, built on a natively multimodal reasoning model architecture from its new AI team. The backstory is important: in June 2025, Zuckerberg hired Alexandr Wang, the 29-year-old co-founder of Scale AI, as Meta's first-ever Chief AI Officer, as part of a $14.3 billion deal giving Meta a 49% stake in Scale AI. Wang then rebuilt Meta's entire AI stack from scratch over nine months: new infrastructure, new architecture, new data pipelines. Muse Spark is the result.

xAI is about to ship the next generation of Grok, and the compute advantage from the Memphis buildout is real. Google DeepMind has been quietly sharpening Gemini and whatever sits above it, and anyone who has been building in that ecosystem lately knows they are not behind. They are pacing themselves. DeepSeek V4 rumours are already moving out of China. Open source is catching up faster than most people realise. What is state of the art in a locked lab today is running on a laptop twelve months later. Sometimes six. We are not at the top of this curve. We are on the knee of it. And it is April.

This is what I meant, in all those quiet conversations over the last two years, when I said the acceleration pattern was the thing to watch. Not any single release. The compounding. The convergence. The way multiple labs are going to keep leapfrogging each other through the rest of 2026, each one raising the floor for everyone else. By Christmas, the version of Mythos-class capability that is freely available will likely be better than anything a professional security researcher could have produced at the start of this year. That isn't speculation. That is the trend line I have been pointing at since late 2024, and it is playing out now in real time.

What this actually means for a business

I spend most of my week in conversations with regional businesses, manufacturers, chambers, nonprofits, and government bodies here on the Sunshine Coast. As a founding member of the Sunshine Coast Council AI Advisory Board, and as the person who has been quietly building live AI agents for local chambers, financial services firms, nonprofits, and tourism organisations through AI Compass, I know the shape of the questions this moment creates before they are asked. So let me save everyone the trip.

The ground under your software just moved. For every system you run, every CRM, every customer portal, every line of code a developer wrote three years ago and never looked at again, the assumption that "it has been fine for a decade, it will be fine for another one" no longer holds. An AI running on fifty dollars of compute can find a twenty-seven-year-old bug in the most hardened operating system on Earth, and your WordPress site with three plugins from 2019 is not going to fare better. Neither is the line-of-business application your last IT contractor handed over in 2021 and nobody has touched since.

The answer, as always, is not panic. Panic is the worst possible response to any technology shift, and the one I spend the most time talking clients out of. The answer is what it has always been at AI Compass. Know what to automate. Know what not to automate. Keep the human in the loop wherever the stakes are real. And start treating your software stack like a living system rather than a filing cabinet, because the rules that governed it just changed underneath it.

Practically, that means three things are worth doing in the next ninety days, and none of them is glamorous. First, audit what you actually have: not the polished diagram IT showed you at onboarding, but the real stack, including the plugins, the integrations, the APIs, and the third-party tools nobody has touched in two years. You cannot defend what you cannot see. Second, put a human in the loop wherever the stakes are real, particularly around financial approvals, sensitive communications, and security decisions. This has been my position since before it was fashionable, and Mythos is the case study that proves it. Third, stop waiting for certainty. The businesses I am working with who started this conversation in 2024 and 2025 are now implementing from a position of clarity, while the ones who waited for the "right time" are about to try to implement under pressure, in a tighter window, while the ground is still shifting. That is a much harder game.
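For anyone who wants to see how small the first step can be, here is a minimal sketch in Python of that kind of audit. Everything in it is an assumption for illustration: the `Component` record, the `audit` function, the two-year threshold expressed as 730 days, and the three sample entries are all hypothetical, not a tool we ship or a standard anyone prescribes. It simply lists what has gone untouched for more than two years and what should have a person signing off.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Component:
    """One entry in a hypothetical software inventory."""
    name: str            # a plugin, integration, API, or third-party tool
    kind: str
    last_touched: date   # date of the last update or review
    high_stakes: bool    # touches money, sensitive communications, or security


def audit(components, today, stale_after_days=730):
    """Return (stale, needs_human) lists of component names.

    stale: not touched for more than `stale_after_days` (assumed: two years).
    needs_human: flagged as high stakes, so a person should approve actions.
    """
    stale = [c.name for c in components
             if (today - c.last_touched).days > stale_after_days]
    needs_human = [c.name for c in components if c.high_stakes]
    return stale, needs_human


# Illustrative entries only; replace with your real stack.
inventory = [
    Component("contact-form plugin", "plugin", date(2019, 6, 1), False),
    Component("invoice approval API", "api", date(2025, 11, 3), True),
    Component("line-of-business app", "application", date(2021, 3, 15), True),
]

stale, needs_human = audit(inventory, today=date(2026, 4, 15))
print("Untouched for more than two years:", stale)
print("Keep a human in the loop:", needs_human)
```

A spreadsheet does the same job. The point is that the list exists, that it reflects the real stack, and that someone owns it.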

The view from here

One last thing worth naming out loud. Anthropic made the right call holding Mythos back. They understood something most vendors would have missed, which is that shipping first is not always shipping right. Getting this model into the hands of defenders before equivalent capability leaks to attackers is the kind of decision that tells you a company is thinking past the next quarter. I do not agree with every move Anthropic has made lately. The way they handled the third-party harness ecosystem over the last week is a separate conversation and not a flattering one. But on Mythos and on Glasswing, they got the call right, and I want to say that clearly even while holding the rest with a critical eye. Because nobody else is going to hold back forever. The other labs are coming. April was the starting gun, not the finish line.

I have been telling people in rooms for two years that this moment was coming. It is here now. It is public now. And we have a narrow window, measured in months not years, before the version of this capability that runs on anyone's laptop is better than anything a professional could have built twelve months earlier. Time to get serious about the boring stuff. The governance stuff. The audit stuff. The "who is actually watching our systems" stuff. The human-in-the-loop stuff that sounds unglamorous until the day you need it.

From where I sit on the Sunshine Coast, the businesses that started this conversation last year are going to sleep better than the ones who are about to start it in June. The region has the infrastructure, the institutional backing, and the ecosystem to handle this transition well. What it needs is leadership willing to move before the pressure becomes undeniable.

The tipping point arrived in April. Most people missed it. You don't have to be one of them. 🖖

Arek Rejman is the Founder and Director of AI Compass, a Sunshine Coast based AI implementation company specialising in agentic systems for businesses, chambers, financial services, and nonprofits. He is a founding member of the Sunshine Coast Council AI Advisory Board and an active contributor to AI strategy across multiple Queensland business networks.

#AICompass #AIImplementation #AgenticAI #SunshineCoastBusiness #ProjectGlasswing #ClaudeMythos #Anthropic