Visionary Friday | December 12, 2025

TIME's 2025 Person of the Year isn't a person!

Yesterday, TIME Magazine named "The Architects of AI" as Person of the Year for 2025. The cover features Jensen Huang, Sam Altman, Elon Musk, Mark Zuckerberg, Lisa Su, Dario Amodei, Demis Hassabis, and Fei-Fei Li, digitally painted onto that famous 1932 "Lunch atop a Skyscraper" photograph. Eight people are sitting on a steel beam, building something that will reshape everything below them. The symbolism isn't subtle. And it shouldn't be.

TIME's editor put it bluntly: "This was the year when artificial intelligence's full potential roared into view, and when it became clear that there will be no turning back or opting out." No turning back. No opting out. Heavy words. And probably accurate.

But here's what I keep thinking about, and it's not what most people are discussing. Everyone's analysing how much AI has changed. The models got smarter. Claude now writes 90% of its own code. Cursor hit $1 billion in annualized revenue faster than almost any startup in history. Companies are throwing $370 billion at data centres this year alone. The technology is moving at a pace that makes Moore's Law look leisurely. All true. All impressive. All missing what I think is the deeper story.

The real question isn't how much AI has changed in the last few years.

The real question is: how much have WE changed?

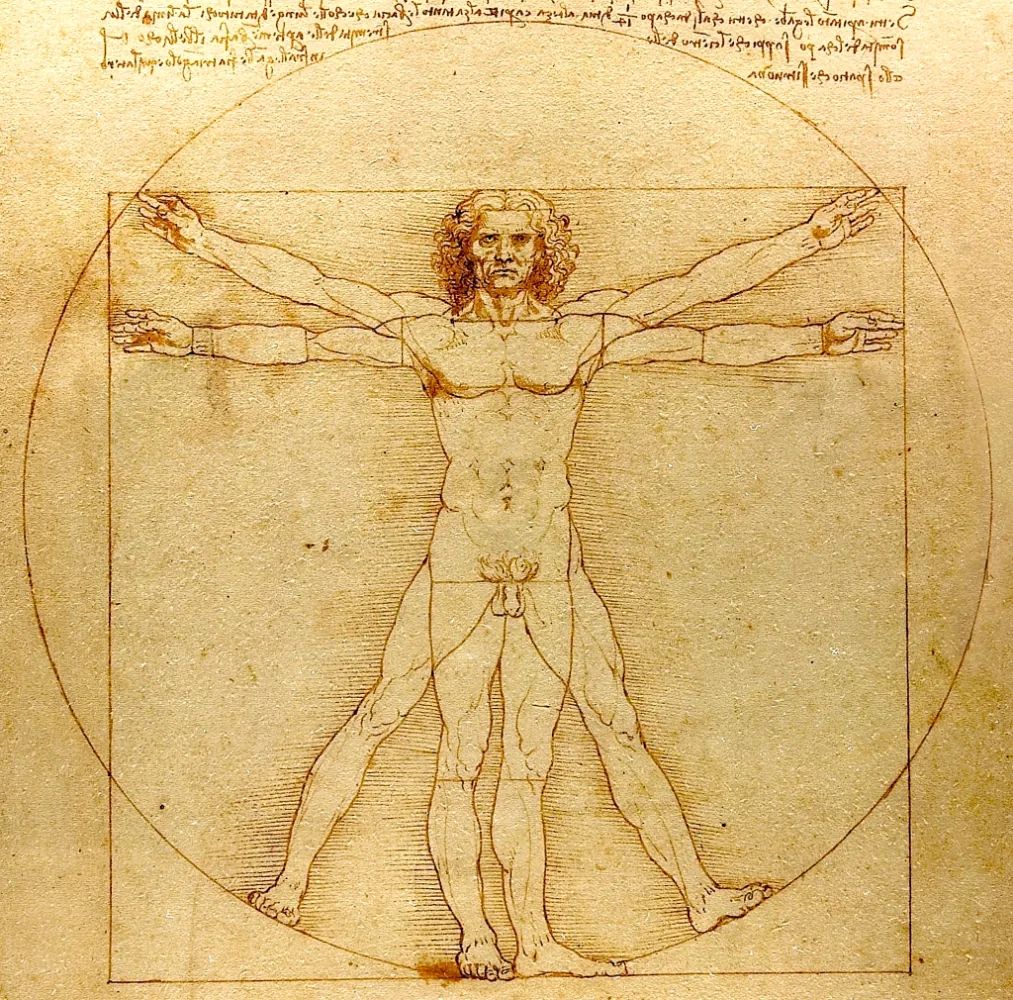

Think about it. We've gone from "that's a clever chatbot" to "maybe this is alien intelligence" in roughly 24 months. Not alien as in little green men. Alien as in fundamentally OTHER. Intelligence that didn't evolve. Intelligence that doesn't think like us. Intelligence that we created but don't fully understand.

Some serious researchers are now saying AI might be "the last invention humanity ever needs to make." I'm not here to tell you whether that's utopian or terrifying. Probably both, depending on the day. I'm not interested in the good vs bad judgment game. I'm interested in something else entirely.

What happens to humanity when we're no longer playing the game of survival?

Many of you know I'm a Star Trek fan. And I should clarify what that actually means, because it's not about the soap opera storylines or the romantic subplots or the special effects that aged like milk in the Queensland summer. Seriously, go back and watch the original series. It's gloriously terrible. I'm a fan of the philosophy underneath.

Gene Roddenberry built an entire universe on one radical premise: What happens when humanity finally gets liberated from the survival game and gets to play the exploration game instead? For virtually all of human history, those two games were inseparable. You explored BECAUSE you needed to survive. You crossed oceans to find resources. You climbed mountains to escape threats. You pushed into unknown territories because the known ones couldn't sustain you anymore.

But what if AI changes that equation?

What if, for the first time in our species' history, we could explore purely for the sake of exploring? Create purely for the joy of creating? Discover simply because discovery is worth doing?

What if the engines that drove so much of human history (the greed, the power hunger, the zero-sum competition) could finally be devalued? Not eliminated; we're still human. But genuinely devalued. Made unnecessary. Rendered obsolete by abundance rather than defeated by willpower.

That's the possibility I find myself thinking about at 2 AM.

Here's what Roddenberry actually got right that most people miss. In the Federation, the big breakthrough wasn't warp drive or phasers or transporters. The real revolution was internal. Humanity evolved past the psychological architecture that served us during scarcity but poisoned us during abundance. In that universe, people don't work because they need to survive. They work because they want to grow, to contribute, to become better versions of themselves. Captain Picard doesn't command the Enterprise for a salary. He does it because exploration and service give his life meaning. The Ferengi exist in Star Trek specifically as a mirror, showing what happens when a species achieves technological advancement but remains psychologically stuck in the acquisition game.

And the Borg? They're the cautionary tale of what happens when technology absorbs humanity rather than liberating it. Assimilation without elevation. Power without purpose. That's the choice Star Trek keeps putting in front of us: Do we use our technological breakthroughs to transcend our limitations, or do we just amplify them? AI is forcing that exact question right now, and we don't get to phone a friend for the answer.

That's what I'm working toward. That's what I pray for and support with my actions. Not blind optimism. I've seen enough botched AI implementations and overhyped promises to keep my feet firmly planted. But genuine hope, backed by deliberate effort.

Now, here's where I want to push the conversation in a different direction.

TIME called these eight people "The Architects of AI." They kept the "Person of the Year" title but gave it to a group building something that increasingly doesn't fit our existing categories.

That choice says something about where we are psychologically.

We've stopped treating AI as just a tool. That shift already happened, probably sometime in 2024, and most people didn't consciously notice it.

But what have we started treating it as instead?

A servant? A partner? A colleague? An oracle? A child we're raising? A force of nature we're trying to harness?

I talk to business owners every week who are trying to implement AI solutions. And what strikes me isn't the technology questions they ask. It's the relationship questions they're wrestling with underneath.

"How much should I trust it?" "When does delegation become abdication?" "Is it helping my team or replacing them?" "What does it mean that my customers prefer talking to the AI?"

These aren't technical questions. These are questions about identity, purpose, and what work actually means when a non-human can do significant chunks of it.

We are not just adopting a technology. We are renegotiating our self-understanding as a species.

And that renegotiation is happening whether we consciously engage with it or not.

So here's what I genuinely want to know from my LinkedIn community. Have we entered the personification phase of AI? Are we now relating to these systems as entities rather than tools, even if we intellectually know they're "just" algorithms?

Is this evolution healthy, dangerous, or simply inevitable regardless of what we think about it?

And the bigger question, the Star Trek question: If AI genuinely does start freeing humanity from the survival game, what game will we choose to play instead? Do you trust us to choose wisely?

I know where I stand. I know what I'm building toward. But I'm genuinely curious about your perspective. Not the polished professional answer. The honest one. The one you think about when you're not performing for your network.

Drop it in the comments. Challenge my thinking. Tell me I'm being naive or not naive enough. Let's have the real conversation.

Because here's the thing I'm absolutely certain about:

The answers to these questions won't come from AI.

They'll come from us.

And maybe that's exactly as it should be.